QCon New York Day 2: Freeing people, imperfect architecture, lean coffee and unanswered questions

That was the weirdest conference party ever. No music, no dancing, just free food and beer. Still, two Austrian guys, a fellow from Dakota and I had plenty of great stories to share. And did I mention the free beer?

Freeing people and optimizing the tools

As any good professional would, I was at the conference venue on time, just like everybody else. And for once, the keynote was worth my time. Dianne Marsh, director of engineering at Netflix, shared how Netflix ensures its engineers get the freedom to innovate. Their basic vision is to free the people and optimize the tools. They do that by having managers provide context rather than control, and by working hard to attract and retain talent. Obviously, engineers tend to go any which way they can, so managers are supposed to help them stay aligned.

Managers should also stimulate experimentation along independent paths of exploration. If you attended the Lean Enterprise talk by Trevor Owens, you will understand why that's such a great thing to do. Next to that, Netflix invests heavily in continuous delivery. Developers can test and deploy their own code using a fully automated deployment pipeline. For larger and riskier changes, they do what they call a red/black deployment or canary testing. In other words, they run the two versions side by side and gradually move users from the old environment to the new one.
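The gradual shift of users from the old to the new version can be sketched as a weighted router. This is only a minimal illustration, not Netflix's actual tooling: the names `CanaryRouter`, `route`, `promote` and `rollback` are hypothetical, and it assumes users are assigned to a version by hashing a stable user ID.

```python
import hashlib


class CanaryRouter:
    """Routes a growing share of users to the new version (hypothetical sketch)."""

    def __init__(self, canary_percent: int = 0) -> None:
        self.canary_percent = canary_percent  # share of users on the new version, 0..100

    def _bucket(self, user_id: str) -> int:
        # Hash the user ID so the same user always lands in the same bucket (0..99).
        digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
        return int(digest, 16) % 100

    def route(self, user_id: str) -> str:
        # Users whose bucket falls below the threshold are sent to the new version.
        return "new" if self._bucket(user_id) < self.canary_percent else "old"

    def promote(self, step: int = 10) -> None:
        # Move more users to the new version, capped at 100 percent.
        self.canary_percent = min(100, self.canary_percent + step)

    def rollback(self) -> None:
        # Send everybody back to the old version if the canary misbehaves.
        self.canary_percent = 0
```

Because the bucket depends only on the user ID, a user who has been moved to the new version stays there as the percentage grows, which keeps their experience consistent during the rollout.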

One other thing that caught my attention is how they treat software as something that can fail at any time. That’s why they created the Chaos Monkey, which shuts down random Amazon VMs to see how the system behaves, and the Latency Monkey, which causes timeouts in network connections at random times. This is pretty special, considering how often I heard it referenced in architectural discussions that day.
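To make the idea of injected failures concrete, here is a small sketch of a wrapper that makes a fraction of calls time out, together with a caller that degrades gracefully. It is an illustration of the principle only, not how Netflix implemented it; `LatencyMonkey`, `fetch_recommendations` and `recommendations_with_fallback` are hypothetical names.

```python
import random
from typing import Callable, List, Optional


class LatencyMonkey:
    """Wraps calls and makes a fraction of them time out (illustrative only)."""

    def __init__(self, failure_rate: float, seed: Optional[int] = None) -> None:
        self.failure_rate = failure_rate  # 0.0 = never fail, 1.0 = always fail
        self._rng = random.Random(seed)

    def call(self, func: Callable, *args, **kwargs):
        # Raise an injected timeout for roughly `failure_rate` of all calls.
        if self._rng.random() < self.failure_rate:
            raise TimeoutError("injected timeout")
        return func(*args, **kwargs)


def fetch_recommendations(user_id: str) -> List[str]:
    # Stand-in for a remote call to another service.
    return [f"title-for-{user_id}"]


def recommendations_with_fallback(monkey: LatencyMonkey, user_id: str) -> List[str]:
    # A resilient caller falls back to a default instead of failing the request.
    try:
        return monkey.call(fetch_recommendations, user_id)
    except TimeoutError:
        return ["popular-title"]
```

Running the normal code path under such a wrapper is what exposes callers that have no fallback at all.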

In all seriousness

Michael Feathers is one of my hero speakers, not only because of his excellent book, but also because most of his talks are really inspiring. He has spoken about Conway's Law numerous times, but this was the first time he used the law to propose organizational solutions. Roughly, he said that irrespective of the greatness of your architecture and all the agile practices you're applying, sooner or later you'll run into scaling issues caused by the organizational structure. I've been working at a client that grew from 8 to 120 people in about three years, and Conway's Law is really visible there. It gets particularly difficult when you can't get all the developers on the same floor. That's one of the reasons Michael has a great interest in the whole micro-services hype. Since each micro-service can be maintained by at most one team, it provides scalability not only at the architectural level but also at the organizational level.

Embrace Imperfection

Right after Michael, I went to Eric Evans' talk on embracing imperfection. Last year I left his full-day tutorial on Domain-Driven Design because it was too boring, but this session was absolutely great. After admitting he wasn't really sure what architecture actually is (!), he talked about our failed attempts to build robust and resilient systems. That's why he believes Netflix is doing such a great job. They've essentially managed to reach perfection in handling imperfection. As a long-time architect, I expect that insight to profoundly affect my future endeavors.

Provided that I understood him correctly, his general rule of thumb is that within a bounded context (BC) you should have 90% of the functionality covered by an elegant architecture. The remainder doesn't have to be so perfect, as long as the BC is aligned with the team structure. That ensures that any shortcuts that are taken are well known within the team and won't surprise other teams. By further decoupling the BCs from each other using special interchange contexts that translate messages between BCs (aka the Anti-corruption Layer), and by not sharing any resources such as databases, you can localize the imperfections. Oh, and with that statement of 'not being so perfect', he actually meant using if-then-else constructs rather than well-designed polymorphic abstractions!
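A translating layer between two bounded contexts could look roughly like the sketch below. Everything in it is an assumption made for illustration: the Sales and Shipping contexts, the message types `SalesOrderPlaced` and `ShipmentRequested`, and the function `translate_sales_to_shipping` do not come from the talk.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SalesOrderPlaced:
    """Message as published by a hypothetical Sales context."""
    order_no: str
    customer_ref: str
    express: bool


@dataclass(frozen=True)
class ShipmentRequested:
    """Message as understood by a hypothetical Shipping context."""
    shipment_id: str
    recipient_id: str
    service_level: str


def translate_sales_to_shipping(message: SalesOrderPlaced) -> ShipmentRequested:
    # This is the only place that knows both vocabularies, so neither
    # context has to depend on the other's model or database.
    # A plain if/else is good enough here; the imperfection stays local.
    if message.express:
        service_level = "next-day"
    else:
        service_level = "standard"
    return ShipmentRequested(
        shipment_id=f"SHP-{message.order_no}",
        recipient_id=message.customer_ref,
        service_level=service_level,
    )
```

If the Sales context later changes how it models an order, only the translation function has to follow, and the Shipping team is not surprised by the change.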

More Eric and Michael

As if this day wasn't already great enough with individual sessions, wouldn't it be cool to have the both of them open up a panel discussion on architecture? Well, here you go. In fact, that panel was joined by Leo Gorodinski, who used Greg Young's EventStore to build a system in F#, and Duncan DeVore, who built an Event Sourcing system in Java. Yes, you've heard that right. They were actually discussing Event Sourcing as an architecture principle in the context of micro-services at QCon. After the session I talked a bit more about that with Leo and Duncan, and they told me that Event Sourcing is only recently getting recognition outside the .NET world. Guess I should be proposing a talk on distributed, occasionally connected systems using Event Sourcing for next year then.

Anyway, one question that was discussed quite extensively is whether domain modeling, or more specifically, aggregate boundaries, are still useful while developing micro-services. I couldn't judge the latter, but in the context of Event Sourcing I shared my experience that having proper aggregates allowed us to move from a traditional relational database system to Event Sourcing without too much of a hassle. However, I also shared that Event Sourcing is not a silver bullet and that you should be prepared to learn a lot of new technical and functional concepts.
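For readers new to the idea, the sketch below shows why a well-defined aggregate maps so naturally onto Event Sourcing: its state is derived purely from the events it has recorded. The `Account` aggregate and the `Deposited` and `Withdrawn` events are hypothetical examples, not taken from the panel or from any of the systems mentioned.

```python
from dataclasses import dataclass, field
from typing import Iterable, List


@dataclass(frozen=True)
class Deposited:
    amount: int


@dataclass(frozen=True)
class Withdrawn:
    amount: int


@dataclass
class Account:
    """A hypothetical aggregate whose state is derived purely from its events."""
    balance: int = 0
    uncommitted: List[object] = field(default_factory=list)

    @classmethod
    def from_history(cls, events: Iterable[object]) -> "Account":
        # Rebuild the current state by replaying the stored events in order.
        account = cls()
        for event in events:
            account._apply(event)
        return account

    def deposit(self, amount: int) -> None:
        self._record(Deposited(amount))

    def withdraw(self, amount: int) -> None:
        # Business rules are enforced inside the aggregate boundary.
        if amount > self.balance:
            raise ValueError("insufficient funds")
        self._record(Withdrawn(amount))

    def _record(self, event: object) -> None:
        # New events change the state and are kept until they are persisted.
        self._apply(event)
        self.uncommitted.append(event)

    def _apply(self, event: object) -> None:
        if isinstance(event, Deposited):
            self.balance += event.amount
        elif isinstance(event, Withdrawn):
            self.balance -= event.amount
```

An aggregate that already guards its own invariants only needs its persistence swapped from rows to events, which is roughly what makes such a migration manageable.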

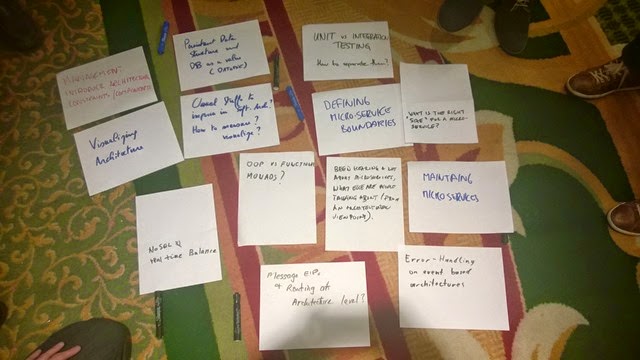

Lean Coffee

To stay within the context of the architecture panel discussion, I attended a Lean Coffee session on improving architecture. Lean Coffee is a simplified version of Open Space where people can propose topics that are openly discussed for a maximum of 5 minutes in the order defined by dot voting. I even asked Eric, Leo and Duncan to stick around for this talk because there were a lot of questions after their session.

Since 5 minutes is short, we covered several topics:

How to handle exceptions in an event-based architecture; After a short discussion we learned the attendee meant a command-based architecture. Somebody proposed to have a callback REST API that command handlers can report errors to. Others proposed to associate each command/event with a correlation ID and have some framework in place for registering errors. I myself proposed to validate the command for correctness synchronously and to handle any business errors asynchronously through a centralized registry, while keeping the errors strictly functional.

How to compose micro-services from aggregates, how to select the right size and how to maintain micro-services; Talk about awkward silence, this was one. Nobody in the group had the experience, or had picked up anything from the QCon sessions, that could answer this. Bummer…

What else are people talking about EXCEPT micro-services; Since I'm working with an Event Sourcing architecture and this was also discussed in the previous panel discussion I shared some of our trials and tribulations of moving from a traditional architecture to ES.

Usual stuff you do to improve architecture and how to visualize and/or measure this; Most of us mentioned the SOLID principles, reducing technical debt, pair programming and code reviews, but we couldn't entirely agree on DRY. Some of us thought DRY is important within a component or bounded context, but much less so outside that boundary. This aligns especially well with Conway's Law, because preventing duplication between teams is a lot more difficult or even undesirable. However, not everybody agreed with that.
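Going back to the first topic, the combination of synchronous validation, correlation IDs and a central error registry could be sketched as follows. This is one possible reading of those proposals under simplifying assumptions: an in-memory list stands in for a real message queue, and `PlaceOrder`, `ErrorRegistry`, `submit` and `handle_pending` are hypothetical names.

```python
import uuid
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass(frozen=True)
class PlaceOrder:
    """Hypothetical command; every instance carries its own correlation ID."""
    customer_id: str
    quantity: int
    correlation_id: str = field(default_factory=lambda: str(uuid.uuid4()))


class ErrorRegistry:
    """Central place where handlers register functional errors per correlation ID."""

    def __init__(self) -> None:
        self._errors: Dict[str, List[str]] = {}

    def report(self, correlation_id: str, message: str) -> None:
        self._errors.setdefault(correlation_id, []).append(message)

    def errors_for(self, correlation_id: str) -> List[str]:
        return list(self._errors.get(correlation_id, []))


def submit(command: PlaceOrder, queue: List[PlaceOrder]) -> str:
    # Synchronous part: reject malformed commands before they are queued.
    if not command.customer_id or command.quantity <= 0:
        raise ValueError("malformed command")
    queue.append(command)
    return command.correlation_id


def handle_pending(queue: List[PlaceOrder], registry: ErrorRegistry, stock: int) -> int:
    # Asynchronous part: business errors are registered, not thrown at the sender.
    while queue:
        command = queue.pop(0)
        if command.quantity > stock:
            registry.report(command.correlation_id, "Not enough stock to fulfil the order")
        else:
            stock -= command.quantity
    return stock
```

The sender keeps the correlation ID returned by `submit` and can later ask the registry whether anything went wrong with that particular command.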

Six questions on micro-services I hoped to get answered, but didn't

So after two days of architecture sessions and discussions, I still have a lot of questions unanswered…

- How to group aggregates in micro-services?

- How do you prevent a micro-service from growing too quickly and becoming macro?

- Should micro-services call each other or should they publish events only?

- How do you version messages to be both backwards and forwards compatible?

- How do you manage the dependencies between the services?

- How do you effectively aggregate data from multiple services to prevent N+1 calls?

Anybody??
